Shares of chipmaker Micron Technology, Inc. (MU) are rising more than 7% Thursday morning after the company reported a narrower loss in the first quarter than expected by

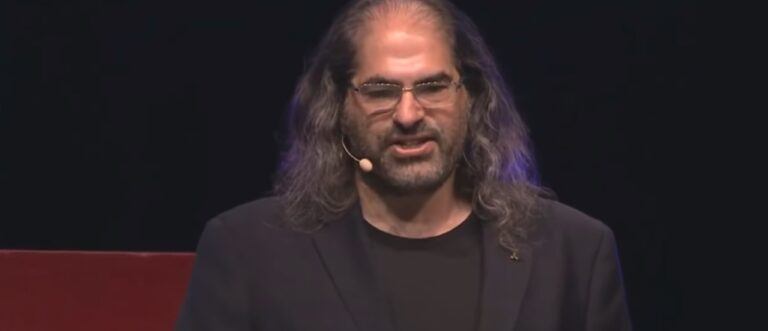

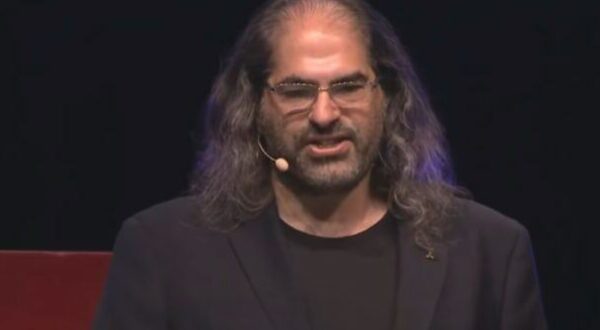

XRP Ledger to Lead Real World Asset Tokenization in 2024, Says Ripple CTO

On December 20, 2023, Ripple’s Chief Technology Officer, David Schwartz, shared his
